Developing and Improving Modified Achievement Level Descriptors: Rationale, Procedures, and Tools

Rachel Quenemoen • Debra Albus • Chris Rogers • Sheryl Lazarus

June 2010

All rights reserved. Any or all portions of this document may be reproduced and distributed without prior permission, provided the source is cited as:

Quenemoen, R., Albus, D., Rogers, C., & Lazarus, S. (2010). Developing and improving modified achievement level descriptors: Rationale, procedures, and tools. Minneapolis, MN: University of Minnesota, National Center on Educational Outcomes.

Introduction

Some states are developing alternate assessments based on modified achievement standards (AA-MAS) to measure the academic achievement of some students with disabilities (Albus, Lazarus, Thurlow, & Cormier, 2009; Lazarus, Thurlow, Christensen, & Cormier, 2007). These assessments measure the same content as the general assessment for a given grade level, but the AA-MAS may have different expectations of content mastery than the general assessment, according to federal regulations and guidance. The United States Department of Education’s Non-regulatory Guidance (2007b) for AA-MAS states:

This assessment is based on modified academic achievement standards that cover the same grade-level content as the general assessment. The expectations of content mastery are modified, not the grade-level content standards themselves. The requirement that modified academic achievement standards be aligned with grade-level content standards is important; in order for these students to have an opportunity to achieve at grade level, they must have access to and instruction in grade-level content. (p. 9)

State policymakers have struggled to understand the underlying educational logic of the distinction between the same grade-level content and different expectations of content mastery. Filbin (2008) described content alignment issues as one of the primary challenges for the first six states that submitted their AA-MAS for Peer Review under the 2001 Elementary and Secondary Education Act (ESEA) requirements. Based on peer review analyses, she found that it is challenging to design an assessment based on grade-level content standards that is of an appropriate difficulty and complexity for this population. Since that first review, special education, curriculum, and measurement experts have posed several questions related to the nature of the distinctions between content coverage and difficulty or complexity (Perie, 2009a).

A key to understanding the relationship of content and difficulty underlying a standards-based test is in the standards themselves. In a standards-based assessment, and specifically in a test that is defined as having “modified achievement standards,” these standards should communicate what kind of performance on which content targets demonstrates acceptable achievement. A standards-based test requires clear definitions of the content being assessed, in relation to articulated content standards, as well as definitions of “how well” students need to perform on the content to be considered proficient, that is, performance standards. These descriptions are included in the process of standard-setting on a standards-based test.

Standards-based reform has resulted in increased attention to performance standards (Cizek, 2006; Crane & Winter, 2006; Haertel, 2008; Hambleton, 2001; Perie, 2009b; Zieky, Perie, & Livingston, 2008). In 2003, the Council of Chief State School Officers took a broad approach to the definition, defining performance standards as:

Indices of qualities that specify how adept or competent a student demonstration must be and that consist of the following four components: (1) levels that provide descriptive labels or narratives for student performance (i.e., advanced, proficient, etc.); (2) descriptions of what students at each level must demonstrate relative to the tasks; (3) examples of student work at each level illustrating the range of performance within each level; and (4) cut scores clearly separating each performance level. (p. 10)

It is the second component of performance standards (the descriptions of what students must demonstrate on the assessment) that we address here.
Although measurement experts have referred to the four components together as performance standards, and the descriptions of student performance as performance level descriptors (PLDs), ESEA 2001 and IDEA 2004 refer to them as “achievement standards.” The AA-MAS gets its name from that statutory language. Given that we are focusing on the AA-MAS, the term we use in this paper is achievement standards, and we specifically refer to the second component described in the CCSSO definition of these achievement standards as achievement level descriptors (ALDs).

The purpose of this paper is to provide a rationale, procedures, and tools to develop and continuously improve AA-MAS ALDs. As states make decisions on whether and how to develop an AA-MAS, they will also be developing a defense of the choices they make. Filbin (2008) documented the early peer review process and outcomes, and it is clear that the choices made must be built on a complex educational logic reflecting content coverage, complexity, and the characteristics of the potential participants. In this paper, we propose a process to guide state work so that stakeholders and policymakers can articulate, from the very beginning, the educational rationale for their choices and the implications of this rationale for the specific design choices they make related to their ALDs. By building on this rationale, involving key policymakers and stakeholders through a systematic process to articulate the underlying logic, and documenting how this logic has influenced state choices using the tools and templates provided, states will have compelling evidence for peer review defense. More importantly, they will have confidence in the educational implications of the choices for students and schools in their state.

Although ALDs from four states were used to develop the paper, our comparison of these states’ general assessment and AA-MAS ALDs is not meant to make judgments on the quality of each state’s work. Instead, our comparative examples from these states are used to develop and test the rationale, procedures, and tools we provide for states to use as they develop and evaluate their ALDs for AA-MAS in relation to the general assessment. These four ground-breaking states developed ALDs prior to the release of final regulations or to the policy discussion that surrounded the regulations. We recognize these states for their work and realize that they did not design their AA-MAS ALDs for this type of scrutiny. Still, we believe they have provided a great service to states that follow by demonstrating how states may consider the characteristics of modified achievement standards, and over time, the field will have a better understanding of the educational logic inherent in these tests. It should be noted that this paper is based on considerations of best practice, and it does not attempt to present an authoritative interpretation of federal policies related to AA-MAS. The processes and tools described in this paper are not necessarily endorsed by the federal government, but they may be helpful to states in meeting federal requirements related to AA-MAS.

Background and Selected Literature for Policymakers and Stakeholders

Achievement level descriptors for a standards-based assessment reflect both the content assessed and the challenge or difficulty of the assessment. ALDs describe how different performance levels on a test reflect specific skills and knowledge in the content being assessed. They are important for that reason: they are where teachers, parents, and the public should be able to learn not only what a student should know and do to be proficient, but how well they should do it. In addition, because the ALDs describe how one level of achievement differs from another, they show which specific content, skills, or knowledge are the next steps in learning. As such, the ALDs can be powerful policy statements and often serve as the only source where content and achievement expectations for students are specifically written down in concise terms.

The choices states make about how the achievement standards differ between the general assessment and the AA-MAS reflect an educational logic of sorts, whether or not test developers have formally articulated the logic. In theory, in a comprehensive assessment system like those developed under current ESEA requirements, states that are developing AA-MAS should determine whether the AA-MAS leads logically to other achievement standards within the assessment system, for example, to grade-level achievement standards (GLAS) or to alternate achievement standards (AAS), or whether they stand alone and are disconnected. Those discussions should then guide development of ALDs for each test. States will vary on these decisions. Perie, Hess, and Gong (2008) have suggested that in some states, the early AA-MAS ALDs and items reflected added supports and scaffolding but the content coverage was the same as the general assessment. In other states, the AA-MAS ALDs and items reflected content knowledge and skills that were different from the general assessment.

As the regulatory language refined state understanding of the need for the same content coverage as the general assessment, content differences have been minimized in most states’ approaches. Based on regulatory language (USED, 2007a) and guidance (USED, 2007b), the comparative status of the AA-MAS to the general assessment as the same content but different expectations of mastery should be reflected in the language of each test’s ALDs. That is, the ALDs of the two tests should be comparable in terms of content coverage by grade but reflect less challenging attainment of the content for similar performance levels, such as proficiency on the general assessment in comparison to proficiency on the AA-MAS. Less challenging achievement standards may be defined in one or more of several ways by varying several conditions. For example, Perie (2009b) suggests that the descriptors can vary in these ways: (1) reducing the cognitive complexity of the required skill, (2) decreasing the number of elements required, or (3) adding appropriate supports and scaffolds to the description of the knowledge and skills required. Further, she suggests that some combination of the options can be used:

In practice, those drafting the modified achievement level descriptors could choose to adopt more than one of these strategies. That is, they could choose to reduce the depth of knowledge required for proficiency on some of the skills, add scaffolds to the statements about other skills, and provide specific examples to others indicating that the student is required to perform a narrower range of tasks than what is required in the grade-level achievement standards. (pp. 244-245)

ALDs are not always developed prior to test development. Measurement experts disagree on whether they should be drafted to guide test development or determined statistically later by difficulty of items and cut scores (Perie, 2009b).

For these initial states, whether they developed the ALDs first or statistically after the fact, there should be a noticeable logic underlying the content differences if the test is to achieve the apparent intent of the regulations. Because the “proficient” level has primary importance in current standards-based accountability designs, ALDs describing the proficient level would arguably be the most promising of the levels to detect the underlying differences and assumptions between general and modified ALDs. Thus, we have limited our analysis to comparing ALDs at the “proficient” level in development of the following tools and procedures. By comparing and contrasting how states describe “proficiency” for the general assessment and the AA-MAS, we were able to identify patterns of variation between them, and assign category names to the patterns for easier analysis. We also identified procedures to make the comparisons more efficient and visible. These categories and procedures were formatted into analysis worksheets and were field-tested on the initial state examples. Practitioners, researchers, and other interested stakeholders can use these tools (the category names and procedures) in development of new ALDs or evaluation of existing ALDs.

Methods Used to Develop the Tools

Collection of achievement level descriptors from state Web sites was completed in early 2009. The collection included only those states that had both general and AA-MAS ALDs for the proficient level available online for reading and math, at grades 4, 8, and 10. This process resulted in ALDs from four states, which were then used to develop and test the tools. Appendix A provides side-by-side ALD texts taken from the full document versions of ALDs posted online for each state.

Category Names for Comparing and Contrasting ALDs

In this report, we demonstrate processes and tools to help build a defense of state choices for AA-MAS. We compare and contrast ALDs for the general assessments and the AA-MAS. We have not included a comparison of each state’s content standards, and have tried to avoid the use of terms associated with each of the most widely used alignment methodologies. Although the ALDs reflect the content standards and are often considered in alignment studies, the terms used in alignment methodologies have specific and complex meanings that are inherent to each of the approaches (Porter & Smithson, 2002; Rothman, Slattery, Vranek, & Resnick, 2002; Wakeman, Flowers, & Browder, 2007; Webb, 1999). Instead, we used more generic terms that can be tailored to a specific setting, as appropriate, as test developers or policymakers work to improve the quality of their ALDs. For example, rather than using terms like “cognitive complexity” or “depth of knowledge,” we used categories of “content” (what), “application” (how), and “degree” (how well). Rather than using a term like “scaffolding,” we chose the general category of “context” (under what conditions). These categories and their definitions are shown in Table 1.

Researchers or practitioners who use this approach to compare and contrast ALDs on specific assessments can refine these coding categories consistent with the terminology used in test development and alignment studies in their state. For example, as state staff or facilitators tailor the tools to state use, they could add terms or clarifications to a category, such as “frequency” or “how often or consistently” in the definition of degree. This comparative analysis tool is simply a tool, and can be amended to better match existing policy and practice choices.

Table 1. Categories Used for Comparing and Contrasting ALDs in Tool Development

To test our categories, two project researchers coded all achievement descriptors for each state’s general assessment and AA-MAS. After they independently coded text for the proficient levels, the results were compared and any disagreements were discussed and resolved. Remaining questions or discrepancies were brought to a third project staff person for resolution. There were relatively few areas for resolution, and in all cases the resolutions were recorded as decision rules. See Appendix B for decision rules developed during the process of applying the coding categories, along with other questions and issues identified by research staff. When the tool is used by states, similar notes on decision rules, questions, and issues should be identified to flag areas for further discussion and clarification. After the initial coding and resolution was completed, the preliminary comparisons were presented to members of a project expert panel (measurement, content, and special education experts) for validation of the process. The expert panel indicated that the categories for coding could be helpful to the field, and endorsed the procedures as useful for both researchers and practitioners.
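For teams that keep their coding in a spreadsheet or script, the comparison of two coders' independent results can be sketched as follows. This is a minimal illustration, not the procedure project staff used: the segment identifiers, the sample codes, and the `compare_codings` helper are all hypothetical, and only the four category names come from this paper.

```python
from enum import Enum


class Category(Enum):
    CONTENT = "content"          # what is to be known
    APPLICATION = "application"  # how the student uses the content
    DEGREE = "degree"            # how well or how much
    CONTEXT = "context"          # under what conditions


def compare_codings(coder_a, coder_b):
    """Compare two coders' results.

    Each argument maps a segment id to the set of categories assigned.
    Returns the share of segments coded identically and a dict of the
    segments that still need discussion.
    """
    segments = sorted(set(coder_a) | set(coder_b))
    disagreements = {
        seg: (coder_a.get(seg, set()), coder_b.get(seg, set()))
        for seg in segments
        if coder_a.get(seg, set()) != coder_b.get(seg, set())
    }
    agreed = len(segments) - len(disagreements)
    rate = agreed / len(segments) if segments else 1.0
    return rate, disagreements


# Hypothetical codes from two coders for three text segments.
coder_a = {
    "seg1": {Category.DEGREE},
    "seg2": {Category.APPLICATION},
    "seg3": {Category.DEGREE, Category.CONTEXT},
}
coder_b = {
    "seg1": {Category.DEGREE},
    "seg2": {Category.CONTENT},
    "seg3": {Category.DEGREE, Category.CONTEXT},
}

rate, to_resolve = compare_codings(coder_a, coder_b)
print(f"Identically coded segments: {rate:.0%}")
for seg, (a, b) in to_resolve.items():
    print(seg, sorted(c.value for c in a), "vs", sorted(c.value for c in b))
```

Segments listed in `to_resolve` are the ones a team would discuss, and each resolution could be written down as a decision rule in the manner of Appendix B.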

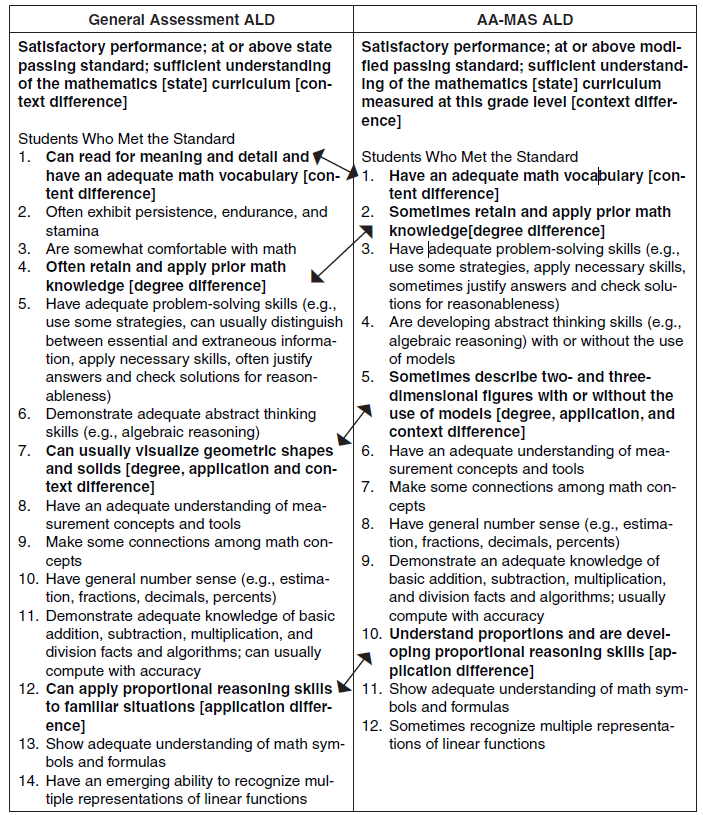

Coding Category Examples from State ALDs for General Assessments and AA-MAS

When coding differences in ALDs, project staff looked at the sets of ALDs side by side, as shown in Table 2. Staff members then determined whether each difference was a content difference, an application difference, a degree difference, a context difference, or multiple differences. Full texts are provided in Appendix A, first in original form and then in coded form. Appendix B provides additional information on how decisions were made for coding. Examples of each type of difference are presented in Table 2 in bold within the listed descriptors. The difference categories are more fully described in Tables 3 through 7. Only one example of each coding category is shown in Table 2; others were identified in the actual analyses.

Table 2. Examples of Difference Categories in Original Text Samples for the General

Note: Bolded words indicate a substantive difference.

An example of a content difference is presented in Table 3. Content difference is defined as “what is to be known by the student.” These texts were coded as a content difference because the general ALD mentions that the student will be able to read for meaning and detail as well as have an adequate math vocabulary, whereas the AA-MAS ALD mentions only having an adequate math vocabulary.

Table 3. Coding Example: Content Difference in ALDs for the General Assessment and AA-MAS Grade 8 Mathematics at “Meets Standard” Level for State 1

See Table 2 for source of example.

Table 4 shows an example of an application difference. Application difference is defined as “how the student uses the content.” The general version states that a student “can apply proportional reasoning skills to familiar situations” and the AA-MAS version says a student will “understand proportions.” Although the language is similar, the terminology suggests a difference in the application of skills.

Table 4. Coding Example: Application Difference in ALDs for the General Assessment and AA-MAS Grade 8 Mathematics at “Meets Standard” Level for State 1

See Table 2 for source of example.

The third coding category, presented in Table 5, shows a degree difference. Degree difference is defined as “how well or how much is to be known by the student.” The general ALD says the student will “often retain and apply prior math knowledge” and the AA-MAS ALD says the student will “sometimes retain and apply prior math knowledge.” So, the difference described is about the degree or frequency with which a student retains and applies prior math knowledge.

Table 5. Coding Example: Degree Difference in ALDs for the General Assessment and AA-MAS Grade 8 Mathematics at “Meets Standard” Level for State 1

See Table 2 for source of example.

The fourth coding example, in Table 6, shows context differences. Context difference is defined as “under what conditions the student demonstrates the content.” In this example, one of the contextual differences is found in the addition of language for the AA-MAS ALD on the right. It repeats the language of the general ALD but adjusts and adds language that places the skills being described in the different context of the “modified passing standard…measured at this grade level.”

Table 6. Coding Example: Context Difference in ALDs for the General Assessment and AA-MAS Grade 8 Mathematics at “Meets Standard” Level for State 1

See Table 2 for source of example.

ALDs often have multiple coding differences represented on one chunk of text. The final example from the Grade 8 Mathematics general assessment and AA-MAS (see Table 2) shows text that was coded as having three differences: degree, application, and context (see Table 7). The degree difference was between “can usually” in the general ALD and “sometimes” in the AA-MAS. The application difference was between “visualize” in the general version and “describe” in the AA-MAS version. The context difference is shown in the AA-MAS ALD, which allows the student to use models that are not mentioned in the general ALD. Content was described differently for geometric shapes and solids and two- and three-dimensional figures, so it is unclear whether this is also a content difference.

Table 7. Coding Example: Multiple Codes in ALDs for the General Assessment and AA-MAS Grade 8 Mathematics at “Meets Standard” Level for State 1

See Table 2 for source of example.
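One way to record a chunk of text that carries several codes at once is sketched below. The `CodedDifference` record and the `tally` helper are hypothetical names introduced for illustration; the phrases come from the Table 7 discussion, except the context entry, which paraphrases the description given in this paper because the table text itself is not reproduced here.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CodedDifference:
    category: str                 # "content", "application", "degree", or "context"
    general_text: Optional[str]   # wording in the general ALD, if any
    modified_text: Optional[str]  # wording in the AA-MAS ALD, if any


# One chunk of ALD text coded with three differences.
segment_codes = [
    CodedDifference("degree", "can usually", "sometimes"),
    CodedDifference("application", "visualize", "describe"),
    # Paraphrase: the AA-MAS ALD allows models that the general ALD does not mention.
    CodedDifference("context", None, "use of models"),
]


def tally(codes):
    """Count how many differences fall in each category."""
    return Counter(code.category for code in codes)


counts = tally(segment_codes)
print(dict(counts))
```

Keeping one record per difference, rather than one per chunk, lets a team count categories across a whole grade and subject without losing the wording that prompted each code.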

Clarification of Grade-Level Nuances Observed in Reading ALDs

Reading ALDs were handled in the same way as mathematics ALDs, but there was an additional complexity to consider given the emphasis in the AA-MAS regulatory language on grade-level content coverage. The ALDs for reading need careful articulation of the nature of the passages used in any reading assessment in order to clarify the grade-level content coverage requirement. In the four states that we examined, it was not always clear what was intended. Because we did not study state content standards, it is possible that areas we saw as unclear are in fact specified in the grade-level content definitions.

In the example shown in Table 8, both the general and AA-MAS ALDs included the phrase “grade appropriate” in describing the reading materials. However, the state further specified how the material was different for the AA-MAS ALDs, describing it as having a reduced cognitive load on grade level in addition to having limited inferential processes and simplified sentence structure. We want to underscore the importance of states being explicit about what they mean by “on-grade level” when used in reading ALDs, and that it is important to be transparent in describing any difference of this type. In Table 8, we highlight the grade-level specific language and language describing text specific to a student’s IEP, even though in addition to specific grade-level references in both ALDs, the general ALD specifically notes independent reading and the addition of technical and persuasive text in comparison to the AA-MAS ALD.

Table 8. Grade-level Complexities in Grade 8 Reading ALDs for the General Assessment and AA-MAS

Table A-5b, State 2 is the source of these examples.

Procedures to Articulate the Educational Logic of ALDs for AA-MAS

When the achievement to be described for some students (for example, students with disabilities) differs in any way from what is expected for most students, then the developers have an obligation to state how it is different and a rationale for why those differences can promote positive outcomes. Then, a systematic process can be used to categorize the general assessment ALDs and identify specific changes that would support students with the specific needs and characteristics of the students who may participate in AA-MAS. This process can be done during the development of AA-MAS ALDs or it can be done to evaluate and improve existing AA-MAS ALDs. The procedures and tools presented in this paper provide ways to develop (or to check on existing) draft achievement level descriptors that reflect intended underlying assumptions.

Based on our analyses of four states’ ALDs for the general assessment and AA-MAS, we concluded that it is possible to use these categorization procedures to articulate the educational logic for ALDs for AA-MAS. This logic should be built on a definition of who the students are who may benefit from participation in AA-MAS, and the specific needs and characteristics of these students that require a different approach to assessment than the general assessment.

Four-Step Process for Use of Procedures and Tools

The four-step process is described here. The process overview and tool templates are provided in Appendix C.

Step 1

The first step is to identify and recruit key policymakers and stakeholders to participate in the process, and to whom background information and training will be provided to ensure a common understanding. Like other standard-setting procedures, the participants should include people with experience and expertise in the content and with the students, and their credentials should be documented.

A common understanding of the purpose of the procedures should be developed. The background materials in this paper can be used as part of that training. The remaining steps involve these policymakers and stakeholders as part of a virtual or face-to-face group process, typically facilitated in a manner similar to other standard-setting procedures used in the state.

Step 2

Once the participants are convened and trained, the second step is to work with them to identify the needs and characteristics of students who may participate in the AA-MAS. This assumes that the state has identified the likely AA-MAS participants through a systematic data-based process that involves analysis of current test-taking patterns and outcomes (see Hess, McDivitt, & Fincher, 2008; Lazarus & Thurlow, in press; Perie, 2008; Quenemoen, 2009; Thurlow, 2008). The needs and characteristics of the students will inform your decisions about the ALDs, and help policymakers articulate the assumptions and rationale for any proposed ALDs.

Here is a set of questions to help identify and articulate underlying assumptions and rationale for AA-MAS ALD development or improvement. As Step 1 assumes, it is best to involve stakeholders who know the students, the content, and the assessment design opportunities and constraints in a study group format to answer the questions. This is especially important for developing descriptors for a different achievement standard than that used for most students, like the AA-MAS. This discussion should be informed by evidence and data that incorporate understanding of opportunities to learn, even if the data come from other states or research studies. This will ensure that historically limited opportunities to learn are not reinforced by assumptions that current achievement is all that can be expected. Policymakers should guide the discussion to focus on what to expect when students have received appropriate instruction in the content to be assessed.
The following questions can guide the work of your study group:

A resource to consider for the stakeholder study group is the Perie (2008) white paper on AA-MAS, and the white paper chapter on identifying the students (Quenemoen, 2009). Section I (Table 9) of the tool template in Appendix C can be used to summarize your findings.

Table 9. Section I of Tool Template

Step 3

Once the stakeholder study group comes to consensus on the summary needs and characteristics and evidence to support the assertions, the third step is to identify specific rationales for differences between ALDs for general assessment and AA-MAS. Decisions on how the descriptors differ should reflect stakeholder consensus, and rationales should clearly track back to the summary of student needs and characteristics in the tool (see Table 9). For example:

You can use the categories of potential changes that are used in this study (i.e., content, context, degree, application) or you can use terms that are commonly used in your state (e.g., depth of knowledge, cognitive complexity, difficulty, etc.).

Step 4

The fourth step is to use the tools provided in Appendix C and the examples below to articulate the summary of AA-MAS student needs and characteristics (see Section I), the general assessment ALDs (see Section II, Column 1), the rationale for any changes proposed for these students (Section II, Column 2), and then either development of or comparison to AA-MAS ALDs (Section II, Column 3). Check these drafts for consistency with the consensus statements of your stakeholder group and the specific student needs and characteristics. As you work, capture areas of concern or questions for curriculum, assessment, and special education partners to address. Focus first on the proficient descriptors. After they are complete, move to the other levels to ensure a logical connection between the general assessment and AA-MAS and within each assessment.

It may be helpful for the meeting facilitators to complete this summary work on the tools during a break such as lunch or between meeting days or times. This transfer of consensus statements to the tool template should be an opportunity to consolidate key issues and ideas in a format that makes the work more focused. If the facilitators transfer the discussion summaries into a final working tool, be sure to allow the meeting participants to check the accuracy of the summaries prior to your final working session. Regardless of the changes made and rationales for the differences, test developers should use comparable formats for achievement level descriptors that differ from those on the general assessment.
Parents and teachers should be able to see exactly what is the same and what is different when the general assessment proficient descriptor is side by side with the modified achievement descriptor. Ideally, developers would include the justifications for why they are different, to inform parents and teachers of the specific purpose and ramifications of the differences.

Evaluating Differences Between the General Assessment and AA-MAS ALDs

Using Section II of Tool Template with Existing AA-MAS ALDs

In order to evaluate the differences between the general assessment achievement level descriptors and the AA-MAS achievement level descriptors, it is important to begin with the ALD texts and match up the language used for each grade level and subject area. Placing these ALD texts in the appropriate columns permits examination of the texts side by side. In this comparison process, it may be difficult to match the ALDs precisely. For instance, the skills may be listed in a different order. In that case, a process of elimination may be followed to match up each ALD text. It may also be difficult to ascertain whether texts are paraphrases or distinctly different terminology reflecting actual differences. Team members categorizing the ALD texts should make note of their own decision rules as well as areas of questions or issues that arise for further discussion. Appendix B has examples of both decision rules and issues found by our project staff, but these are meant as examples only.

In Table 10, the first steps of aligning the texts in Columns 1 and 3 have been completed, using examples from Appendix A. A next step not illustrated here is to evaluate the rationale for the differences detected between the general assessment and AA-MAS ALD texts. Specific rationales are not fully developed in the example because we do not know the rationale used by the states we studied.

Note: The content area and grade vary in this example. The proficient level is used in all examples.
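When the two sets of ALD statements are listed in a different order, a rough text-similarity pass can suggest which statements to place side by side before team members review them. The sketch below is an assumption-laden aid, not part of the project's method: it uses Python's standard `difflib` module, pairs the most similar statements first, and lets the remainder fall into place by elimination. The four statements are the phrases quoted in the Table 4 and Table 5 discussions; the function names are hypothetical.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Character-level similarity between two statements, from 0 to 1."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_statements(general, modified):
    """Pair statements greedily, most similar pair first."""
    scored = sorted(
        ((similarity(g, m), g, m) for g in general for m in modified),
        reverse=True,
    )
    pairs, used_g, used_m = [], set(), set()
    for score, g, m in scored:
        if g not in used_g and m not in used_m:
            pairs.append((g, m, round(score, 2)))
            used_g.add(g)
            used_m.add(m)
    return pairs


def word_changes(general_text, modified_text):
    """List the word runs that differ between two matched statements."""
    gw, mw = general_text.split(), modified_text.split()
    ops = SequenceMatcher(None, gw, mw).get_opcodes()
    return [
        (" ".join(gw[i1:i2]), " ".join(mw[j1:j2]))
        for tag, i1, i2, j1, j2 in ops
        if tag != "equal"
    ]


general = [
    "can apply proportional reasoning skills to familiar situations",
    "often retain and apply prior math knowledge",
]
modified = [
    "sometimes retain and apply prior math knowledge",
    "understand proportions",
]

pairs = match_statements(general, modified)
for g, m, score in pairs:
    print(f"{score:.2f} | {g} | {m} | {word_changes(g, m)}")
```

A similarity score cannot tell a paraphrase from a substantive change, so the pairing and the listed word changes are only candidates for the team to code and discuss.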

Using Section II of Tool Template to Develop New AA-MAS ALDs

In order to develop new achievement level descriptors for the AA-MAS, the beginning point is to place the existing ALD text from the general assessment into the tool in the appropriate column. The team would provide proposed changes in the center column, along with a specification of the rationale for these differences. Rationales should include what is known about the students identified as appropriately participating in the AA-MAS, and whether student characteristics suggest differences in content (what is being taught), degree (how well or how much is to be known), application (how it is to be demonstrated), and context (under what conditions it is to be demonstrated). Another rationale element could include consideration of what barriers students who may participate in the AA-MAS would encounter in demonstrating what they know and can do. Further considerations reflected in the rationale may include whether the affected students have been provided opportunities to receive the same instructional content as other students, and the implications if they have not been. Review team members should consider how these ALDs relate to other grade levels from earlier and later in students’ learning and assessment. It is important to reflect on what the differences imply for interpreting test results of the AA-MAS.

After these differences are identified, and rationales are specified, the formulation of the wording of the AA-MAS ALDs is entered. Table 11 shows examples in Column 1 from Appendix A in order to demonstrate the use of the tool. A next step not completed here is to describe proposed changes and the rationale for the differences detected between the general assessment and AA-MAS ALD texts. As the changes and rationales are proposed, development team members must determine whether they are defensible based on what is understood about the students who may participate.
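If a team keeps Section II in electronic form, a simple completeness check can flag rows where the AA-MAS wording differs from the general wording but no rationale has been recorded yet. The sketch below assumes a hypothetical `SectionIIRow` layout that mirrors the three columns described above; it is not the Appendix C template itself. The first row uses the phrases quoted in the Table 5 discussion, and the second row is illustrative wording only.

```python
from dataclasses import dataclass


@dataclass
class SectionIIRow:
    general_ald: str      # Column 1: general assessment ALD text
    proposed_change: str  # Column 2: category of change (e.g., "degree")
    rationale: str        # Column 2: why the change suits these students
    aa_mas_ald: str       # Column 3: AA-MAS ALD text


def rows_missing_rationale(rows):
    """Return rows whose wording changed but whose rationale is blank."""
    return [
        row for row in rows
        if row.general_ald.strip() != row.aa_mas_ald.strip()
        and not row.rationale.strip()
    ]


rows = [
    SectionIIRow(
        general_ald="often retain and apply prior math knowledge",
        proposed_change="degree",
        rationale="",  # not yet articulated by the team
        aa_mas_ald="sometimes retain and apply prior math knowledge",
    ),
    SectionIIRow(  # illustrative wording, unchanged between the two ALDs
        general_ald="have an adequate math vocabulary",
        proposed_change="",
        rationale="",
        aa_mas_ald="have an adequate math vocabulary",
    ),
]

flagged = rows_missing_rationale(rows)
for row in flagged:
    print("Needs rationale:", row.aa_mas_ald)
```

The check only confirms that a rationale exists; whether it is defensible given the students who may participate remains a judgment for the development team.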

A final procedural step for state assessment staff and the state vendors will include studying the alignment of the assessment itself and the proposed ALDs. As mentioned in the opening section, experts differ on whether ALDs should be developed before test development or after. Regardless of which approach to ALD development is taken, states have an obligation to ensure that the assessment and the ALDs are aligned. For example, if the assessment is developed first, or an existing general assessment is “modified” in some way and then the ALDs are written, the types of changes to the ALDs should be consistent with the design, revisions, and enhancements made to the assessment. If the assessment is developed or “modified” after the ALDs are drafted, the types of changes in the ALDs should drive the types of revisions and enhancements made to the assessment. Once the final AA-MAS form is created, the ALDs should be compared to what students are actually expected to show they know and can do on the assessment. This will ensure a strong alignment between the two. For example, the ALDs should not include references to scaffolding (e.g., segmented texts) or other contextual features that are not provided in the assessment.

Tips for Tailoring Use of the Tools to Specific State Contexts and Stakeholder Teams

The use of these tools and procedures can support high expectations and improved outcomes for students who may participate in an AA-MAS. Engaging key stakeholders with varied perspectives and expertise in the process of building the rationale for the assessment can help ensure these outcomes. Such interdisciplinary teaming is powerful but challenging, and it may take time and group discussion to ensure that varied perspectives are understood and considered. Through use of the example ALD comparisons in Appendix A, the team can use neutral text from another state to develop understanding of the tool and process. There are several benefits from a stakeholder team working together on a tryout of the tool using another state’s example from Appendix A. First, the example allows content experts and special educators to discuss in theory what changes are defensible to ensure these students can show what they know while still maintaining the integrity of the intended content, before applying the tool to their own state example. This allows the discussion to be initiated in a nonthreatening way. A tryout of the tools using an example from Appendix A also can identify procedural choices that will work well when using actual state ALDs. Content experts on your team can identify the terms and definitions to use for your categories to replace or refine the ones used here (i.e., content, context, application, degree). Special educators on your team will be able to consider how the needs and characteristics of the students affect their learning and demonstration of content from the examples. Based on the tryout and discussions, the tool can be modified to reflect the specific context in your state. The examples provided in Appendix A represent early work on development of AA-MAS PLDs.
Since that time, many states have developed new ways of thinking about the issues related to content coverage at grade level and difficulty or complexity. These include concepts like embedded use of scaffolds (e.g., timelines, graphic organizers) to organize information, shorter segmented reading passages, or use of reminders of the key problem solving steps in mathematics. As peer review continues on state submissions, it will be important to identify and make use of examples from publicly available PLDs from states that receive approval for their AA-MAS. These new examples may provide additional ideas for your stakeholder groups as you work to build an AA-MAS that meets the regulatory requirements and that can help improve the achievement of students who participate in the assessment option.

References

Albus, D., Lazarus, S. S., Thurlow, M. L., & Cormier, D. (2009). Characteristics of states’ alternate assessments based on modified academic achievement standards in 2008 (Synthesis Report 72). Minneapolis, MN: University of Minnesota, National Center on Educational Outcomes.

Cizek, G. J. (2006). Standard setting. In S. M. Downing & T. M. Haladyna (Eds.), Handbook of test development (pp. 225-258). Mahwah, NJ: Lawrence Erlbaum.

Council of Chief State School Officers. (2003). Glossary of assessment terms and acronyms. Washington, DC: Author.

Crane, E. W., & Winter, P. C. (2006). Setting coherent performance standards. Washington, DC: Council of Chief State School Officers, Technical Issues in Large-Scale Assessment SCASS.

Filbin, J. (2008). Lessons from the initial peer review of alternate assessments based on modified achievement standards. Washington, DC: U.S. Department of Education, Office of Elementary and Secondary Education.

Haertel, E. H. (2008). Standard setting. In K. E. Ryan & L. A. Shepard (Eds.), The future of test-based educational accountability. New York: Routledge.

Hambleton, R. K. (2001). Setting performance standards on educational assessments and criteria for evaluating the process. In G. J. Cizek (Ed.), Setting performance standards: Concepts, methods, and perspectives (pp. 89-116). Washington, DC: U.S. Department of Education.

Hess, K., McDivitt, P., & Fincher, M. (2008, June). Who are those 2% students and how do we design items that provide greater access for them? Results from a pilot study with Georgia students. Paper presented at the 2008 CCSSO National Conference on Student Assessment, Orlando, FL. Retrieved from http://www.nciea.org/publications/CCSSO_KHPMMF08.pdf

Lazarus, S. S., & Thurlow, M. L. (in press). The changing landscape of alternate assessments based on modified academic achievement standards (AA-MAS): An analysis of early adopters of AA-MAS. Peabody Journal of Education.

Lazarus, S. S., Thurlow, M. L., Christensen, L. L., & Cormier, D. (2007). States’ alternate assessments based on modified achievement standards (AA-MAS) in 2007 (Synthesis Report 67). Minneapolis, MN: University of Minnesota, National Center on Educational Outcomes.

Perie, M. (Ed.). (2009a). Considerations for the alternate assessment based on modified achievement standards (AA-MAS): Understanding the eligible population and applying that knowledge to their instruction and assessment. Washington, DC: U.S. Department of Education.

Perie, M. (2009b). Developing modified achievement level descriptors and setting cut scores. In M. Perie (Ed.), Considerations for the alternate assessment based on modified achievement standards (AA-MAS): Understanding the eligible population and applying that knowledge to their instruction and assessment (pp. 235-266). Washington, DC: U.S. Department of Education.

Perie, M. (2008). A guide to understanding and developing performance level descriptors. Educational Measurement: Issues and Practice, 27(4), 15-29.

Perie, M., Hess, K., & Gong, B. (2008). Writing performance level descriptors: Applying lessons learned from the general assessment to alternate assessments based on alternate and modified achievement standards. Dover, NH: National Center for the Improvement of Educational Assessment. Available at www.nciea.org

Porter, A. C., & Smithson, J. L. (2002). Alignment of assessments, standards, and instruction using curriculum indicator data. Available at: cep.terc.edu/dec/research/alignPaper.pdf

Quenemoen, R. (2009). Considering why and whether to assess students with an alternate assessment based on modified achievement standards. In M. Perie (Ed.), Considerations for the alternate assessment based on modified achievement standards (AA-MAS): Understanding the eligible population and applying that knowledge to their instruction and assessment (pp. 17-50). Washington, DC: U.S. Department of Education.

Rothman, R., Slattery, J. B., Vranek, J. L., & Resnick, L. B. (2002). Benchmarking and alignment of standards and testing. National Center for Research on Evaluation, Standards, and Student Testing. Available at: www.cresst.org/

Thurlow, M. L. (2008). Assessment and instructional implications of the alternate assessment based on modified academic achievement standards (AA-MAS). Journal of Disability Policy Studies, 19(3), 132-139.

U.S. Department of Education. (2007a, April 9). Final Rule 34 CFR Parts 200 and 300: Title I—Improving the Academic Achievement of the Disadvantaged; Individuals with Disabilities Education Act (IDEA). Federal Register, 72(67). Washington, DC: Author. Available at: www.ed.gov/admins/lead/account/saa.html#regulations

U.S. Department of Education. (2007b, July 20). Modified Academic Achievement Standards: Non-regulatory Guidance. Washington, DC: Office of Elementary and Secondary Education, U.S. Department of Education. Available at: www.ed.gov/admins/lead/account/saa.html#regulations

U.S. Department of Education. (2007c, December 21). Standards and Assessment Peer Review Guidance: Information and Examples for Meeting Requirements of the No Child Left Behind Act of 2001. Washington, DC: Office of Elementary and Secondary Education, U.S. Department of Education. Available at: www.ed.gov/policy/elsec/guid/saaprguidance.pdf

Wakeman, S., Flowers, C., & Browder, D. (2007). Aligning alternate assessments to grade level content standards: Issues and considerations for alternates based on alternate achievement standards (Policy Directions 19). Minneapolis, MN: University of Minnesota, National Center on Educational Outcomes.

Webb, N. L. (1999). Alignment of science and mathematics standards and assessments in four states (Research Monograph No. 18). Madison, WI: University of Wisconsin-Madison.

Zieky, M., Perie, M., & Livingston, S. (2008). Cutscores: A manual for setting performance standards on educational and occupational tests. Princeton, NJ: Educational Testing Service.

Appendix A

Side-By-Side Tables of Achievement Level Descriptors for Grade-Level and Modified Assessments

The tables contained in this appendix are organized to present the process by which the ALDs from the four states were analyzed, and to serve as examples for state team training and tryouts of procedures. We ordered the subjects alphabetically, and the grade levels sequentially, so that grade 4 math precedes grade 4 reading, and grade 4 reading precedes grade 8 reading, for instance. We organized each of the states’ ALDs at each subject area and grade level before proceeding to the next subject area and grade level. We made this decision so that readers can consider the different ways that each subject and grade was approached, and to ease comparison among these approaches. In this project, we decided to focus on proficient level descriptors. Although there is value in comparing the other achievement levels to one another, doing so is beyond the scope of this report.

For each state there are two tables by subject (Reading and Math) for each of the three grade levels (4, 8, and 10). The first of the pair is the full text of the achievement level descriptors for the grade-level assessment and the modified assessment. The texts are shown as written by the states, with some variations to remove state-specific terminology. The differences that we identified between the two texts are placed in bold italics so that readers can recognize them easily. The second table of the pair shows the individual differences between the texts and how the differences were categorized: content, degree, application, and context.

A few other conventions were employed with the second table of each pair. Ellipses indicate that the wording was part of a larger sentence of text. Some phrases were included for clarification, although they did not differ between the texts. When this was done, the words that were not different were placed in brackets. This was commonly done with degree differences, in order to clarify what the qualifying word was modifying, as in: “often [justify]” versus “sometimes [justify].” In some cases, a phrase represented more than one category of difference, so the relevant word was underlined to show in the table which word was being identified with which type of difference. Finally, in some cases an ALD appeared in one text and not the other; that is, in the grade-level and not the modified, or vice versa. When this occurred, the term “[absent]” was applied to show that the specific ALDs were not in the text, whether grade-level or modified.

These examples of states’ ALDs are not attributed to a specific state, but are listed by a numeral, and randomized in order. This decision was made to focus on the analytic process and not the specific state ALD decisions for the purpose of this report.

Table A-1. Categories used for comparing and contrasting ALDs in tool development

Table A-2a. State 1

Table A-2b. State 2

Table A-4c. State 3

Table A-4d. State 4

Table A-5. Reading, Grade 8

Table A-5a. State 1

Table A-5b. State 2

Table A-5c. State 3

Table A-5d. State 4

Appendix B

Achievement Level Descriptor Analysis Decision Rules

Other Points to Discuss: This section provides examples from Appendix A that were unclear to the research team, but that probably can be resolved by people who deeply understand their content standards. On the other hand, identifying questions like these helps point out to evaluators or developers where more explanation or specific language is needed.

• In State 2 4th grade math, the AA-MAS PLD text reads: The student is usually accurate when reading and plotting points in the first quadrant of a coordinate grid [Note: The related GLAS PLD text reads: “Fourth grade students will demonstrate knowledge and skills in . . . first quadrant coordinate grids”] Issue: Is the “reading and plotting points” a specific skill which narrows the set of skills, making it an application difference from the GLAS, or is the “when reading and plotting points” a unique contextual condition around the topic area of coordinate grids? [Comment: we think it is the former, but are considering the possibility that it is the latter instead]

• In State 2 4th grade math, the AA-MAS PLD text reads: The student uses some problem-solving techniques to accurately solve real-world applications of the statistical measures (minimum and maximum value, range, mode, median, and mean) [Note: The related GLAS PLD text reads: “Fourth grade students will demonstrate knowledge and skills in . . . application of the statistical measures (minimum and maximum value, range, mode, median, and mean)”] Issue: Is the “real-world applications” framing a specific type of application, and if so, is that a content or context difference? [Comment: we think it is the latter, but are considering the possibility that it is the former instead]

• In State 2 4th and 8th grade math, the AA-MAS PLD text reads: A student scoring at the meets standard level usually performs consistently and accurately when working on grade-level mathematical tasks based on modified achievement standards for eligible students with an IEP which includes: reduced cognitive load on grade level; increased visual representations; simplified reading and sentence structure. [Note: The related GLAS PLD text reads: “A student scoring at the proficient level is likely to perform at all cognitive levels on many elements of the four areas of emphasis.”] Issue: Is the segment on “grade-level mathematical tasks based on modified achievement standards” considered both content and context, since it specifies tasks yet also indicates conditions that narrow the content? [See also example below on [state] 4th grade reading for similar but different issue to contrast with this question]

• In State 2 4th and 8th grade reading, the GLAS PLD text reads: When independently reading grade-appropriate . . . text, a proficient student has satisfactory comprehension . . . [Note: The related AA-MAS PLD text reads: “When reading grade appropriate . . . text, a meets standard student has satisfactory comprehension when using modified achievement standards for eligible students with an IEP . . .”] Issue: Is the term “independently” considered application in that independent reading is a specific type of reading, or is it considered context because it is a condition around which the reading is being accomplished? [Comment: we think it is the former, but are considering the possibility that it is the latter instead]

In fourth grade, students identify, predict, and describe the results of transformations of plane figures. [Note: The related GLAS PLD text reads: “Students use coordinate planes to describe the location and relative position of points.”] Issue: Is the clause “identify, predict, and describe” considered application because these are parts or skills within the umbrella content of planes, or is it content because each of the skills is a separate content area? [Comment: we think it is the former, but are considering the possibility that it is the latter instead]

• In State 4 4th grade math, the AA-MAS PLD text reads: They use the order of operations to verify and translate mathematical relationships with symbols, words, numbers, and pictures. [Note: The related GLAS PLD text reads: “Students generally can use the order of operations or the identity, commutative, associative, and distributive properties.”] Issue: Is the phrase “with symbols, words, numbers, and pictures” considered application or context? [Comment: we think it is the latter, but are considering the possibility that it is the former instead]

• In State 4 8th grade math, the GLAS PLD text reads: [Proficient level] students consistently show a proficient level of understanding of real numbers including irrational numbers. [Note: The related AA-MAS PLD text reads: “In grade eight, students are exposed to and show basic proficiency in the following concepts: develop the concept of and make estimates with irrational numbers.”] Issue: It is unclear how to conceptualize the phrase “real numbers including irrational numbers.” That is, the MAS text doesn’t clarify that irrational numbers might assume students could show understanding with real numbers, so maybe there is a difference in texts because the GLAS specifies both? Perhaps students would understand real numbers before making estimates with irrational ones? [Comment: While uncertain, we considered this a content difference.]

• In State 4 8th grade reading, the AA-MAS PLD text reads: Students compare and contrast elements within text to make meaning based on evidence. [Note: The related GLAS PLD text reads: “They use context clues to identify and define unknown words and compare and contrast related concepts.”] Issue: Is the phrase “within text” considered application because it pertains to the application of a skill (compare and contrast) in a particular way, or is it context because it places conditions around the way in which the skill (compare and contrast) is accomplished?

• In State 3 4th grade reading, the AA-MAS PLD text reads: Students scoring at the Satisfactory level typically read and comprehend grade-level modified reading material and will: . . . recognize and interpret cause and effect, sequence, and compare/contrast [Note: The related GLAS PLD text reads: “Students scoring at the Satisfactory level typically read and comprehend grade-level reading material and will: . . . recognize and interpret relationships in narrative and expository text to include cause and effect, sequence, and compare/contrast”] Issue: The GLAS is missing the specific types of texts; is this a content difference because the MAS may have different content than the GLAS [either less content and a narrowing of the curriculum] or is it the same content? Or is this a context difference because the MAS may be narrowing the text types that the GLAS doesn’t? Or might the content be the same because we do not know the nature of “grade level modified reading material” in relation to “grade level” reading material? [Comment: we think it is the former, but are considering the possibility that it is the latter instead]

• In State 1 4th grade and 8th grade math there is the following difference: GLAS PLD text: Satisfactory performance; at or above state passing standard; sufficient understanding of the mathematics [state] curriculum. AA-MAS PLD text: Satisfactory performance; at or above modified passing standard; sufficient understanding of the mathematics [state] curriculum measured at this grade level. Issue: Is the use of the word “modified” in referring to the passing standard for the AA-MAS a difference not worth noting, or is this a context difference? [Comment: we think it is the latter, but are considering the possibility that it is the former instead]

NOTE: In [state], some content was noted as not being assessed using multiple choice items. Given the current design, there are other MM (Multiple Measure) items considered as field test items under development for potential future use. It is unclear if the content is intended to be measured using MM items instead, but if it is, they may not be counted now as part of the regular assessment.

Appendix C

Procedures and Tools to Evaluate or Develop AA-MAS ALDs

Step 1: Identify, recruit, and train policymakers and stakeholders who will serve as advisors, and convene them in a virtual or face-to-face meeting.

Step 2: Identify the needs and characteristics of students who may participate in the AA-MAS. With a stakeholder group, discuss these questions:

Step 3: Identify specific rationales for differences between ALDs for general assessment and AA-MAS. Decisions on how the descriptors should differ should reflect stakeholder consensus, and rationales should clearly track back to the answers to the study group questions. For example:

NOTE: You can use the categories of potential changes that are used in this study (i.e., content, context, degree, application) or you can use terms that are commonly used in your state (e.g., depth of knowledge, cognitive complexity, difficulty).

Step 4: Use the tool template to analyze and summarize student needs and characteristics for AA-MAS (Section I), the general assessment ALDs (Section II, Column 1), the rationale for any changes proposed for these students (Section II, Column 2), and then either evaluation or development of AA-MAS ALDs (Section II, Column 3). Check these drafts for consistency with the consensus statements of your stakeholder group.

Tool Template to Evaluate or Develop ALDs for AA-MAS

Section I

Section II: Comparisons and Rationales for Changes to General Assessment ALDs
